Somewhere around the 30-employee mark, a company decides it needs a data warehouse. The reasoning is sound: customer data lives in eight different tools, nobody trusts any single one, and leadership wants dashboards. So engineering spins up Snowflake or BigQuery, sets up Fivetran to ingest everything, builds some dbt models, and six weeks later the CEO gets a dashboard in Looker.

The dashboards are nice. But six months in, marketing is still complaining that Mailchimp has stale segments. Sales is still manually updating HubSpot with billing data they pulled from Stripe. Support still can’t see whether a customer is on a paid plan without switching tabs. The warehouse didn’t fix any of that.

I’ve watched this play out at four different companies over the past few years, and the disappointment follows the same arc. Not because warehouses are bad, but because people expect them to solve a problem they were never designed for.

Warehouses Are Read Infrastructure

A data warehouse is optimized for one thing: querying large volumes of historical data. It’s columnar storage with a SQL interface. Ingestion tools like Fivetran and Stitch pull data in, transformation tools like dbt reshape it, and BI tools like Looker and Metabase query it. The entire pipeline flows in one direction: from your operational tools into the warehouse.

This is genuinely useful for analytics. If you want to know how many customers churned last quarter, or which marketing channel drives the highest LTV, the warehouse is the right answer. Build the model, write the query, put it on a dashboard. That workflow works well.

The problem shows up when you try to push data back out. Your support team doesn’t query Snowflake. They use Zendesk. Your marketing team doesn’t write SQL. They build segments in Klaviyo. For the warehouse to actually improve how these teams work, you need to get the data back into their tools, which means reverse ETL.

Reverse ETL tools like Census and Hightouch exist to solve exactly this. They query your warehouse on a schedule and push the results to downstream tools. On paper, this closes the loop. In practice, it adds another layer of infrastructure, another sync schedule, another set of field mappings to maintain, and another place where data can break silently.
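To make "query on a schedule and push the results" concrete, here is a minimal sketch of what one sync run does. It is not how Census or Hightouch are implemented. The warehouse is stood in for by an in-memory SQLite database, the downstream tool by a plain dict, and the `customer_model` table, `FIELD_MAP`, and `run_sync` are all names I made up for illustration.

```python
import sqlite3

# Hypothetical mapping from warehouse columns to the destination tool's field names.
# This is the "set of field mappings to maintain".
FIELD_MAP = {"plan": "billing_plan", "mrr_cents": "monthly_revenue_cents"}
COLUMNS = ("email", "plan", "mrr_cents")


def run_sync(warehouse, destination):
    """One scheduled run: query the model, push rows that differ. Returns rows pushed."""
    rows = warehouse.execute("SELECT email, plan, mrr_cents FROM customer_model")
    pushed = 0
    for row in rows:
        record = dict(zip(COLUMNS, row))
        payload = {FIELD_MAP[col]: record[col] for col in FIELD_MAP}
        if destination.get(record["email"]) != payload:
            destination[record["email"]] = payload  # stand-in for a vendor API call
            pushed += 1
    return pushed


# Stand-in warehouse with a single modeled customer row.
warehouse = sqlite3.connect(":memory:")
warehouse.execute(
    "CREATE TABLE customer_model (email TEXT PRIMARY KEY, plan TEXT, mrr_cents INTEGER)"
)
warehouse.execute("INSERT INTO customer_model VALUES ('ada@example.com', 'free', 0)")

support_tool = {}
run_sync(warehouse, support_tool)  # first scheduled run

# The customer upgrades. The destination keeps showing 'free' until the next run.
warehouse.execute(
    "UPDATE customer_model SET plan = 'pro', mrr_cents = 4900 "
    "WHERE email = 'ada@example.com'"
)
stale_plan = support_tool["ada@example.com"]["billing_plan"]

run_sync(warehouse, support_tool)  # next scheduled run catches up
```

Even in this toy version, a renamed column or a missed run leaves the destination quietly out of date, and nothing in the loop tells you.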

I’m not saying reverse ETL doesn’t work. It does. But the total architecture you need to keep your SaaS tools in sync via the warehouse route is: ingestion tool, warehouse, transformation layer, reverse ETL tool, and monitoring for all four. That’s a lot of moving parts for “I want my support team to see billing data.”

The Latency Problem Nobody Budgets For

Here’s something that doesn’t come up enough in warehouse architecture discussions: latency.

Most ingestion tools run on a schedule. Fivetran’s standard sync frequency is six hours on their base plan. Faster syncs cost more. Then dbt models run on their own schedule, maybe hourly, maybe daily. Then the reverse ETL tool runs on its schedule. Each stage waits on the one before it, so the delays stack instead of overlapping. By the time a change in Stripe reaches your CRM, it could be 12-18 hours old.
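The arithmetic is worth doing once. The sketch below adds up per-stage wait times for a three-stage pipeline. The six-hour ingestion interval comes from the figure above; the four-hour reverse ETL interval is an assumption I picked for illustration, and the model ignores how long each job takes to run. `Stage`, `worst_case_staleness`, and `average_staleness` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    interval_hours: float  # time between scheduled runs


def worst_case_staleness(stages):
    """A change that just misses every run waits one full interval per stage."""
    return sum(stage.interval_hours for stage in stages)


def average_staleness(stages):
    """Rough estimate: a change arriving at a random time waits half an interval per stage."""
    return sum(stage.interval_hours / 2 for stage in stages)


ingestion = Stage("ingestion", 6)      # six-hour sync, as quoted above
reverse_etl = Stage("reverse ETL", 4)  # assumed interval, for illustration only

hourly = [ingestion, Stage("dbt", 1), reverse_etl]
daily = [ingestion, Stage("dbt", 24), reverse_etl]

for label, pipeline in (("hourly dbt", hourly), ("daily dbt", daily)):
    print(label, worst_case_staleness(pipeline), average_staleness(pipeline))
```

With these intervals the worst case is 11 hours when dbt runs hourly and 34 hours when it runs daily. Your own numbers will differ, but the shape holds: the slowest schedule in the chain dominates, and every extra stage adds to the total.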

For analytics, this is fine. Your quarterly churn rate doesn’t change minute by minute. But for operational use cases, stale data causes real problems. A customer upgrades their plan and calls support an hour later. The support agent sees the old plan because the warehouse pipeline hasn’t caught up yet. The customer has to explain what happened. The agent has to check Stripe manually.

You could throw money at the problem by paying for faster sync frequencies across the entire pipeline. Some teams do. But you’re optimizing a fundamentally batch-oriented architecture for near-real-time behavior, and it always feels like you’re working against the grain.

I worked at a company that spent three months building what they called the “golden record” in their warehouse. Every customer attribute from every tool, merged and deduplicated into one canonical row. Beautiful dbt model, well-tested, fully documented. Then someone asked “can we push this to Intercom so support can see it?” and the answer was “yes, but on a 4-hour delay.” Half the value disappeared right there.

What the Problem Actually Is

The reason the warehouse approach feels like overkill for keeping tools in sync is that it is overkill. You’re routing data through an analytical system to solve an operational problem. It’s like driving to the airport to get to the coffee shop across the street.

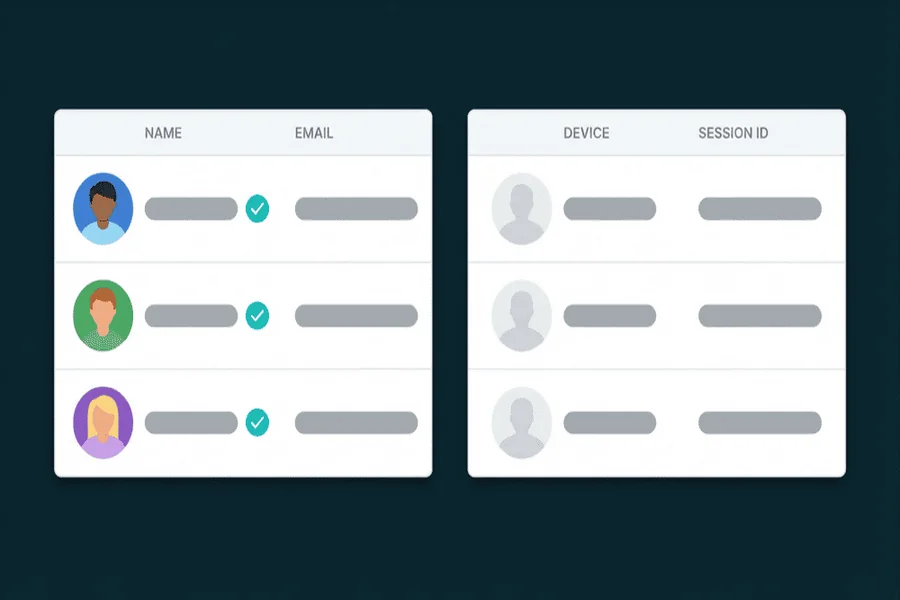

The core issue most teams face is not “we can’t query our data.” It’s “our tools don’t agree about our customers.” HubSpot has one email for a contact, Intercom has another, Stripe has a third. The billing plan in the CRM doesn’t match what’s in the payment system. A customer’s company name is spelled three different ways across four tools.

This is a synchronization problem, not an analytics problem. What you need is infrastructure that keeps records consistent across tools in something close to real time, handles conflicts when two tools disagree, and tracks what changed and why. A customer data platform built around sync rather than around queries is a more natural fit here.
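Here is a rough sketch of the "handles conflicts" and "tracks what changed and why" parts. It uses last-write-wins, which is only one possible policy and an assumption on my part; a real system might prefer a designated source of truth per field. The `FieldValue` type, the `reconcile` function, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class FieldValue:
    source: str           # which tool reported this value
    value: str
    updated_at: datetime  # when that tool last changed it


def reconcile(field, candidates):
    """Pick the most recently updated value and log every tool that must change."""
    winner = max(candidates, key=lambda c: c.updated_at)  # last-write-wins
    changes = [
        {
            "field": field,
            "tool": c.source,
            "old": c.value,
            "new": winner.value,
            "reason": f"newer value from {winner.source}",
        }
        for c in candidates
        if c.value != winner.value
    ]
    return winner, changes


# Three tools, three different emails for the same customer.
emails = [
    FieldValue("hubspot", "ada@oldcorp.example", datetime(2024, 1, 5, tzinfo=timezone.utc)),
    FieldValue("intercom", "ada@gmail.example", datetime(2024, 2, 1, tzinfo=timezone.utc)),
    FieldValue("stripe", "ada@newcorp.example", datetime(2024, 3, 9, tzinfo=timezone.utc)),
]

winner, changes = reconcile("email", emails)
for change in changes:
    print(change)
```

The change log is the piece that gets skipped most often, and it’s what lets you answer "why does Intercom show this email?" a month later.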

The warehouse-centric approach became the default partly because the tooling matured first. Snowflake, dbt, Fivetran, and Looker became the “modern data stack” around 2019-2020 and soaked up most of the attention. The operational side of customer data management caught up later, and a lot of teams had already committed to the warehouse path by then.

When the Warehouse Is Worth It (And When It Isn’t)

I don’t think warehouses are a mistake. If you have a data team, if you run complex analytical queries, if you need to join data across systems for reporting purposes, a warehouse is the right tool. What I’d push back on is treating the warehouse as your customer data strategy.

If your primary goal is keeping your SaaS tools in sync so that every team works with current, consistent data, the warehouse adds complexity without directly solving that problem. You end up maintaining a pipeline that exists mostly to shuttle data through a system designed for something else.

A useful litmus test: if you removed your warehouse, would your customer-facing teams lose access to the data they need to do their jobs? If yes, the warehouse is serving an operational function and you should think about whether it’s the right architecture for that. If no, the warehouse is doing its actual job (analytics) and the operational sync problem needs its own solution.

Most teams I talk to fall into the first category. They built the warehouse for analytics but ended up depending on it for operational data distribution because it was the only system that had everything in one place. That dependency wasn’t planned. It just happened because nobody built the operational layer deliberately.

Build the Operational Layer First

If I were starting over at a 20-person company today, I’d set up the operational sync layer before even thinking about a warehouse. Get your tools talking to each other directly. Make sure your CRM knows about billing changes within minutes, not hours. Make sure your support tool shows the data your support team needs without them switching tabs.
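As a sketch of what "talking to each other directly" can look like, here is a tiny in-process publish/subscribe hub where a billing change fans out to every tool the moment it happens. The tools are plain dicts and the `plan.changed` event shape is invented. A real setup would sit on each vendor’s webhooks and APIs, which this does not model.

```python
from collections import defaultdict


class EventBus:
    """Tiny in-process publish/subscribe hub."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        # Every subscriber hears about the change right away, not on a schedule.
        for handler in self._handlers[event_type]:
            handler(payload)


# Stand-ins for the CRM and the support tool.
crm = {}
support_tool = {}


def update_crm(event):
    crm[event["email"]] = {"plan": event["plan"]}


def update_support_tool(event):
    support_tool[event["email"]] = {"plan": event["plan"]}


bus = EventBus()
bus.subscribe("plan.changed", update_crm)
bus.subscribe("plan.changed", update_support_tool)

# Hypothetical event emitted when the billing system records an upgrade.
bus.publish("plan.changed", {"email": "ada@example.com", "plan": "pro"})
```

The difference from the scheduled approach is the trigger: the change itself starts the update, so there is no interval to wait out.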

Then, later, if you need analytics that span multiple systems, add the warehouse. At that point it can focus on what it’s good at (historical analysis, complex joins, dashboards) instead of also being responsible for keeping your Mailchimp segments up to date.

This order matters because the operational problems hurt first and hurt more visibly. A support agent working with stale data creates a bad customer experience today. A missing dashboard is an inconvenience. But the industry defaults to building the analytical layer first, probably because “we need a data warehouse” is an easier pitch to engineering leadership than “we need to keep our tools in sync.” One sounds like infrastructure investment. The other sounds like glue work that nobody wants to own.

Anyway, this isn’t really about warehouses versus other tools. It’s about recognizing that querying your data and distributing your data are two different problems that happen to involve the same data. Solving one doesn’t automatically solve the other, and pretending it does is how you end up with a $30k/year warehouse bill and a support team that still can’t see which plan a customer is on.